The pharmaceutical industry has spent the better part of a decade building what it calls omnichannel commercial capability. Dedicated teams, new technology platforms, personalisation strategies, next-best-action engines. The investment has been significant. The results have been, to be generous, mixed.

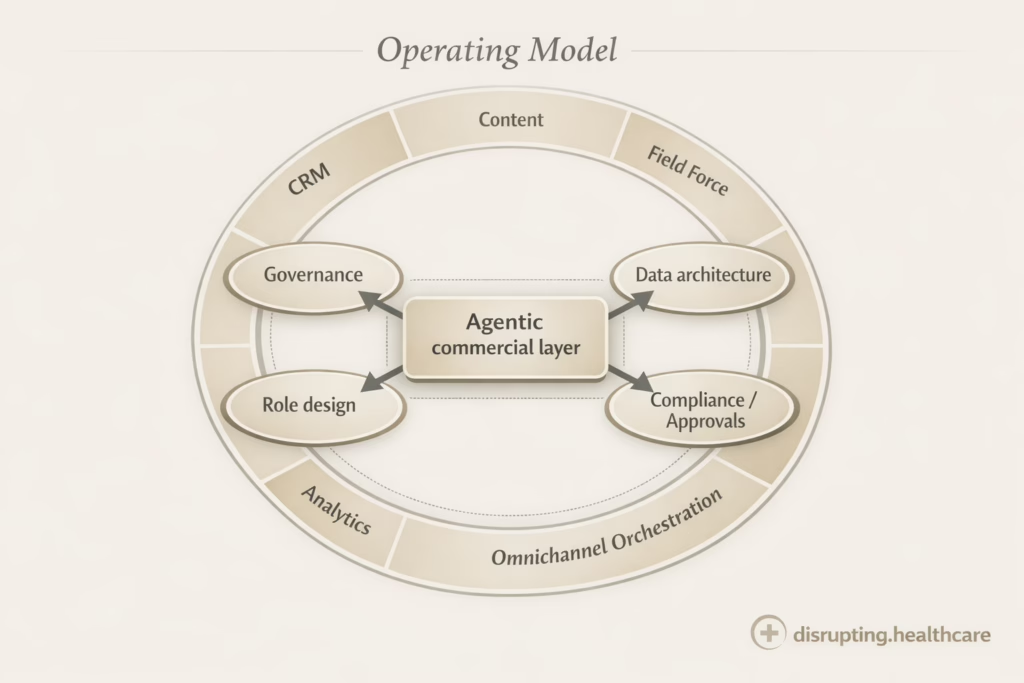

Agentic AI in pharma commercial is being pitched as the fix for omnichannel HCP engagement. But the real question is not whether the technology works. It is whether the life sciences commercial operating model can handle systems that act across channels, content and workflows rather than simply suggesting the next best move.

What Is Agentic AI in Pharma Commercial?

Agentic AI refers to AI systems that do not just generate content or answer prompts, but can plan, decide and take actions across defined workflows such as insights generation, content operations, field support or campaign orchestration.

In life sciences commercial operations, it acts more like a governed digital teammate: connecting data, tools and rules to execute tasks with less manual coordination, while still operating under human oversight, compliance controls and clear escalation points.

The problem is not the technology. Agentic systems can do what their vendors claim. The problem is that most commercial operating models cannot absorb what these systems require to function. The data architectures are wrong. The decision rights are unclear. The roles were not designed around continuous, machine-executed engagement.

Before your organisation can benefit from agentic AI, it needs to reckon with some structural realities. Not later, but before the contract is signed.

Agentic AI does not make omnichannel smarter. It exposes every structural flaw that was already there.

What the hype says vs. what reality looks like

What the demos promise

- Personalised HCP engagement at scale

- Real-time next-best-action decisioning

- Seamless omnichannel orchestration

- Autonomous execution without manual intervention

- Dynamic content optimisation across touchpoints

- Unified customer view across all channels

What commercial ops actually looks like

- Fragmented CRM data updated quarterly

- Decision rights owned by committees, not systems

- Channel teams operating in separate silos

- Approval workflows designed for batch campaigns

- Content libraries that are months out of date

- Identity resolution that fails on a significant share of records

What “agentic” actually means at the commercial operating layer

Goal-driven

An agentic system receives a defined objective — increase engagement quality with this HCP segment — and selects its own actions to pursue it. It does not wait for instructions on which channel to use, which message to send, or when to act.

Persistent

Unlike a one-time recommendation engine, an agentic system maintains state. It tracks what it has tried, adapts based on outcomes, and continues operating between human reviews. This persistence is what makes it powerful and what makes governance hard.

Operationally connected

Agentic AI is not a standalone analytics tool. It needs read and write access to your CRM, your content management system, your channel execution layer, and your consent infrastructure. If those systems are not integration-ready, the agent cannot act.

Three structural preconditions

Decision rights built for machines, not campaigns

Most commercial organisations allocate decision authority through campaign processes. A brand team decides the message. A medical team approves the content. An operations team configures the channel. A compliance team signs off. Each step takes days or weeks.

Agentic AI breaks this model. When a system makes hundreds of micro-decisions per day — which email to send, whether to trigger a field alert, how to sequence a digital touchpoint — the campaign approval cycle becomes an immediate bottleneck.

Decision rights need to be redesigned before you deploy. This means defining which decisions the agent is authorised to make autonomously, which require human confirmation, and which are off-limits entirely. This is not a technology configuration. It is an organisational design question.

What this means in practice: You need a decision authority matrix that covers machine-executed actions, not just campaign-level approvals. Legal and medical review processes need to be rearchitected around rule sets and thresholds, not document-by-document sign-off.
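One way to picture a decision authority matrix is as a lookup from action type to authority level and accountable owner. The sketch below is illustrative only: the action names, owner roles and three authority levels are assumptions made for the example, not a description of any vendor platform or any company's actual framework.

```python
from dataclasses import dataclass
from enum import Enum


class Authority(Enum):
    AUTONOMOUS = "autonomous"        # agent may act without review
    HUMAN_CONFIRM = "human_confirm"  # agent proposes, a named human confirms
    FORBIDDEN = "forbidden"          # agent must never take this action


@dataclass(frozen=True)
class Rule:
    authority: Authority
    owner: str  # accountable role (hypothetical role names)


# Hypothetical matrix: every action name and owner here is an assumption.
DECISION_MATRIX = {
    "send_approved_email": Rule(Authority.AUTONOMOUS, "agent_owner"),
    "trigger_field_alert": Rule(Authority.HUMAN_CONFIRM, "field_lead"),
    "suppress_field_visit": Rule(Authority.HUMAN_CONFIRM, "agent_owner"),
    "modify_approved_content": Rule(Authority.FORBIDDEN, "medical_review"),
}


def authority_for(action: str) -> Authority:
    """Look up the authority level for an action.

    Actions missing from the matrix default to FORBIDDEN, so a new
    capability stays off until someone explicitly authorises it.
    """
    rule = DECISION_MATRIX.get(action)
    return rule.authority if rule else Authority.FORBIDDEN
```

The default-deny lookup is the design choice worth noting: anything the organisation has not explicitly decided stays out of the agent's hands.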

Data architecture built for continuous decisioning

Next-best-action systems were built to work with periodic data snapshots. A weekly sync from the CRM, a monthly update from the data warehouse, a quarterly refresh of the segmentation model. This cadence was acceptable when humans were making decisions once a week.

Agentic systems make decisions continuously. They need current data. If a physician attended a symposium yesterday, an agentic system should know by this morning. If a digital engagement triggered a CRM update three hours ago, the agent’s next action should reflect it.

Most pharma commercial data architectures are not built for this. They have latency baked into every pipeline. Fixing this is not a small project. It requires rethinking how data flows from source systems into the commercial decisioning layer.

What this means in practice: Audit your data latency before you evaluate agent platforms. If your CRM sync runs weekly, no amount of sophisticated AI will give you the continuous decisioning capability the demos show. The data infrastructure is the long pole in the tent.
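A latency audit can start as something very simple: for each feed, compare the time since the last successful sync with the staleness the agent can tolerate. The following is a minimal sketch under stated assumptions. The feed names and freshness budgets are hypothetical, and a real audit would read sync timestamps from pipeline metadata rather than a hand-written dictionary.

```python
from datetime import datetime, timedelta


def audit_latency(feeds, now):
    """Return (name, lag, budget) for every feed whose lag exceeds its budget.

    `feeds` maps a feed name to (last_successful_sync, freshness_budget).
    Results are sorted with the stalest feed first.
    """
    stale = []
    for name, (last_sync, budget) in feeds.items():
        lag = now - last_sync
        if lag > budget:
            stale.append((name, lag, budget))
    return sorted(stale, key=lambda row: row[1], reverse=True)


# Hypothetical feeds and budgets, for illustration only.
now = datetime(2025, 1, 8, 9, 0)
feeds = {
    "crm_sync": (datetime(2025, 1, 1, 9, 0), timedelta(hours=24)),
    "event_attendance": (datetime(2025, 1, 7, 20, 0), timedelta(hours=24)),
    "consent_status": (datetime(2025, 1, 8, 8, 30), timedelta(hours=1)),
}

for name, lag, budget in audit_latency(feeds, now):
    print(f"{name}: lag {lag} exceeds budget {budget}")
```

In this example only the weekly CRM sync is flagged, which is the pattern the paragraph above describes: the feed the agent depends on most is often the slowest one.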

Role design built for systems, not isolated launches

Commercial teams were built around campaigns and launches. Brand managers own the launch plan. Medical affairs manages KOL relationships. Field force owns the territory. Digital owns the channel. Each role has a defined lane and a defined moment of accountability.

Agentic AI doesn’t operate in campaigns. It operates continuously, across lanes, across moments. When the system decides to suppress a field visit because digital engagement metrics are high, who owns that call? When the agent deprioritises a brand because another product shows higher engagement propensity, who is accountable?

Organisations that deploy agentic AI without redesigning roles will experience conflict, not efficiency. The system will make decisions that no one’s job description covers, and the organisation will route around it rather than work with it.

What this means in practice: Define the agent owner role before deployment. This is not the platform administrator or the data scientist. The agent owner is accountable for the commercial outcomes the system drives and has the authority to adjust its operating parameters.

Who is building toward this — and what they are actually saying

Sanofi

Sanofi is among the most transparent large pharma organisations about its AI commercial investments. Its Turing platform is designed to drive personalised HCP engagement by connecting data signals across channels and triggering next-best-action recommendations at scale. The plai initiative — Sanofi’s real-time, company-wide data layer — is explicitly positioned as the infrastructure layer that makes continuous decisioning possible.

At VivaTech 2025, Sanofi’s leadership addressed the organisational dimension directly. The message was not that AI replaces the commercial model. It was that AI requires the commercial model to change. Investments in data infrastructure, role redesign, and new approval and execution processes were presented as prerequisites, not afterthoughts.

Sanofi is still building toward the operating model these ambitions require. But the framing is notably more honest than most vendor presentations: the constraint is not the technology. It is the organisation.

Sources: Sanofi Digital & AI · Sanofi VivaTech 2025

Novartis

Novartis has articulated a responsible AI framework that is worth reading alongside any vendor proposal for agentic commercial deployment. Its approach distinguishes between AI systems that assist human decision-making and AI systems that execute decisions, and places explicit governance requirements on the latter category.

The distinction matters commercially. Agentic systems sit in the execution category. They are not generating a recommendation for a sales rep to act on. They are acting. Novartis’s framework asks: who is accountable when the system is wrong? Who defines the guardrails? Who can halt the system if something is not working?

These are not regulatory compliance questions. They are operating model questions. The organisations that answer them clearly before deployment will have a measurable advantage over those that answer them after something goes wrong.

Source: Novartis Responsible AI


The governance question nobody is asking yet

The industry conversation about agentic AI governance has focused almost entirely on regulatory compliance — data privacy, off-label risk, transparency requirements. This is necessary. It is not sufficient. The operational governance question is different, and most organisations have not answered it yet. When a system is making hundreds of commercial decisions per day on behalf of your organisation, the governance structure that managed a dozen campaign approvals per quarter is not fit for purpose.

Who owns decision logic?

The rules and parameters that define what the agent will and will not do need an owner. Not a committee — an accountable individual with the authority to change them.

Who sets thresholds?

Every agentic system operates within thresholds — when to escalate, when to suppress, when to act. These are commercial and clinical judgements, not IT configurations.

Who monitors drift?

Model drift, data drift, and outcome drift are all real risks in deployed systems. Who is watching, on what cadence, with what authority to act when drift is detected?
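As a rough illustration of what "watching for drift" can mean in practice, the sketch below compares a recent window of an outcome metric against a baseline window and flags a relative change beyond a tolerance. The metric, the sample values and the 20% tolerance are all assumptions chosen for the example. Production monitoring would normally use proper statistical tests and separate checks for model, data and outcome drift.

```python
from statistics import mean


def outcome_drift(baseline, recent, tolerance=0.2):
    """Flag drift when the recent mean moves more than `tolerance`
    (as a fraction of the baseline mean) in either direction.

    Returns (drifted, relative_change).
    """
    base = mean(baseline)
    if base == 0:
        raise ValueError("baseline mean is zero; relative change is undefined")
    change = (mean(recent) - base) / base
    return abs(change) > tolerance, change


# Hypothetical weekly engagement rates for one HCP segment.
baseline_rates = [0.10, 0.12, 0.11]
recent_rates = [0.07, 0.08, 0.06]

drifted, change = outcome_drift(baseline_rates, recent_rates)
if drifted:
    print(f"Outcome drift detected: {change:+.0%} versus baseline")
```

The check itself is trivial. The governance question from the text is who receives this signal, how often it runs, and what they are authorised to do about it.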

Who can shut the agent down?

This is not a catastrophe question. It is a day-to-day operations question. If the system starts behaving in ways that conflict with commercial strategy, the answer cannot be “file a ticket.”
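A halt control does not need to be elaborate to be useful. The sketch below shows one possible shape: a flag the agent checks before every action, which only named roles can set, with each halt and resume recorded. The class name, role names and log format are hypothetical, and a real deployment would persist this state outside the agent process.

```python
class AgentControl:
    """Minimal halt switch: authorised roles can stop or resume the agent."""

    def __init__(self, authorised_roles):
        self._authorised = set(authorised_roles)
        self._halted = False
        self.audit_log = []  # (role, event, reason) tuples

    def _check(self, role):
        if role not in self._authorised:
            raise PermissionError(f"role {role!r} may not halt or resume the agent")

    def halt(self, role, reason):
        self._check(role)
        self._halted = True
        self.audit_log.append((role, "halt", reason))

    def resume(self, role, reason):
        self._check(role)
        self._halted = False
        self.audit_log.append((role, "resume", reason))

    def may_act(self):
        """The agent calls this before executing any action."""
        return not self._halted


# Hypothetical usage: the agent owner halts the agent directly.
control = AgentControl(authorised_roles={"agent_owner"})
control.halt("agent_owner", "behaviour conflicts with commercial strategy")
print(control.may_act())
```

The point of the sketch is the permission check: the authority to stop the agent sits with a named role, not with a ticket queue.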

The next collision: 30 to 50 parallel pilots

The current state of agentic AI in pharma commercial looks like this: dozens of pilots running simultaneously, often without awareness of each other, each optimising for a different objective, all pointing at the same HCPs.

One pilot is optimising email engagement. Another is managing rep scheduling. A third is running digital ad sequencing. A fourth is handling medical information follow-up. Each has its own data feed, its own success metric, its own oversight structure — or none. The HCP on the receiving end experiences this as noise. The commercial team experiences it as conflicting priorities and finger-pointing when channel budgets don’t reconcile.

- Data ownership conflicts emerge when multiple pilots need the same underlying HCP data with different update frequencies and transformation logic.

- Channel conflicts arise when two pilots are simultaneously trying to influence the same HCP touchpoint.

- Governance gaps accumulate as each pilot is owned by a different team with a different approval framework.

- Success measurement breaks down when pilots use incompatible metrics and attribution models.

- Scaling becomes impossible when each pilot has been built as a one-off rather than as a module in a shared system.

The answer is not to slow down on pilots. The answer is to build the operating model infrastructure that turns pilots into a coordinated portfolio.
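One small piece of that shared infrastructure is a check for channel conflicts: given the touches each pilot plans to make, find the HCPs that more than one pilot intends to contact on the same day. This is a sketch under simplifying assumptions. The pilot names and HCP identifiers are invented, and it presumes pilots publish their planned touches to a common place, which is itself an operating model decision.

```python
from collections import defaultdict
from datetime import date


def find_conflicts(planned_touches):
    """Return {(hcp_id, day): [pilots]} where more than one distinct pilot
    plans to contact the same HCP on the same day.

    `planned_touches` is an iterable of (pilot, hcp_id, day) tuples.
    """
    pilots_by_hcp_day = defaultdict(set)
    for pilot, hcp_id, day in planned_touches:
        pilots_by_hcp_day[(hcp_id, day)].add(pilot)
    return {
        key: sorted(pilots)
        for key, pilots in pilots_by_hcp_day.items()
        if len(pilots) > 1
    }


# Hypothetical planned touches from three pilots.
touches = [
    ("email_pilot", "HCP-001", date(2025, 3, 3)),
    ("rep_scheduling_pilot", "HCP-001", date(2025, 3, 3)),
    ("ad_sequencing_pilot", "HCP-002", date(2025, 3, 3)),
    ("email_pilot", "HCP-002", date(2025, 3, 4)),
]

for (hcp_id, day), pilots in find_conflicts(touches).items():
    print(f"{hcp_id} on {day}: {', '.join(pilots)}")
```

Detecting the collision is the easy part. Deciding which pilot yields, and who makes that call, is where the decision authority and agent owner questions come back in.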

What to build before you buy

The organisations that will benefit most from agentic AI in commercial are not the ones racing fastest to the first deployment. They are the ones investing in the operating model changes that make any deployment sustainable.

Three things to build before you sign the next agentic AI contract: a decision authority framework that explicitly covers machine-executed decisions; a data architecture review that maps current latency against what continuous decisioning actually requires; and a role design process that defines who owns agent outcomes and has the authority to change agent behaviour.

None of this requires waiting. Each can be started now, in parallel with technology evaluation. Starting with operating model design also significantly improves technology selection — because you know what you actually need the system to do, and what infrastructure you have to support it.

The organisations that benefit most from agentic AI will not be the ones with the best models. They will be the ones with the most coherent operating model.

Frequently Asked Questions about Agentic AI

What is agentic AI in life sciences commercial?

Agentic AI refers to AI systems that pursue defined objectives autonomously — selecting actions, executing them, and adapting based on outcomes — without waiting for human instruction at each step. In life sciences commercial, this means systems that can decide which channel to use, which message to send, and when to act across HCP engagement programmes, rather than simply generating a recommendation for a human to action.

How is agentic AI different from next-best-action in pharma?

Next-best-action systems generate a recommendation — they tell a sales rep or marketing team what to do next. Agentic AI executes the action itself. This distinction has significant consequences for governance, data requirements, and role design. A recommendation system fails quietly; an autonomous system acts, which means its failure modes are operational, not just analytical.

What operating model changes are required before deploying agentic AI in pharma commercial?

Three structural changes are required before agentic AI can function effectively in pharma commercial: first, decision rights need to be redesigned so that machine-executed micro-decisions are explicitly authorised rather than left in a governance grey zone; second, data architecture needs to support continuous, low-latency decisioning rather than periodic batch updates; third, commercial roles need to be redesigned around agent ownership and outcome accountability, not campaign execution.

Why do pharma commercial operating models struggle with agentic AI?

Most pharma commercial operating models were designed around campaign cycles, not continuous decisioning. Approval workflows, data pipelines, and role structures all assume that a human is reviewing and authorising each significant action. Agentic AI makes hundreds of decisions per day across channels and HCP segments. The campaign-era operating model creates bottlenecks, governance gaps, and role conflicts that prevent the system from functioning as designed.

What is the agent owner role in pharma commercial?

The agent owner is the individual accountable for the commercial outcomes driven by an agentic AI system. This role is distinct from the platform administrator (who manages the technology) and the data scientist (who builds and monitors models). The agent owner defines operating parameters, has authority to adjust decision logic and thresholds, and is accountable when the system’s behaviour conflicts with commercial strategy. Most pharma commercial organisations have not yet defined this role.

How does data latency affect agentic AI in pharma commercial?

Agentic AI requires current data to function effectively. If a physician attended a medical symposium yesterday, the system should reflect that by this morning. If a digital interaction triggered a CRM update three hours ago, the agent’s next action should account for it. Most pharma commercial data architectures run on weekly or monthly sync cycles, which means the agent is always working with stale information. Auditing and reducing data latency is a prerequisite for effective agentic deployment, not a follow-on optimisation.

What governance questions should pharma companies answer before deploying agentic AI?

Four governance questions are critical before deployment: Who owns the decision logic — the rules and parameters defining what the agent can and cannot do? Who sets the thresholds that determine when the agent escalates, suppresses, or acts? Who monitors for model drift, data drift, and outcome drift, and on what cadence? And who has the authority to shut the agent down if its behaviour conflicts with commercial strategy? Without clear answers to these questions, agentic deployment creates accountability gaps that become operational problems.

Further reading and sources

- Sanofi Digital & AI strategy

- Sanofi VivaTech 2025 — AI at scale summary

- Sanofi VivaTech 2025 — All in on AI: How to Change an Organisation With AI (YouTube, June 12, 2025)

- Novartis Responsible AI framework

- McKinsey & Company — Agentic AI in life sciences: charting a path forward

This content has been enhanced with AI